As artificial intelligence (AI) continues to evolve, AI-powered code generation tools are becoming increasingly sophisticated and integral to software development. These tools promise to streamline coding practices, reduce human error, and accelerate development cycles. However, to ensure that AI code generators are reliable and produce high-quality code, a robust test plan is necessary. This article outlines the key components of a test plan for AI code generators, providing a comprehensive approach to evaluating their effectiveness.

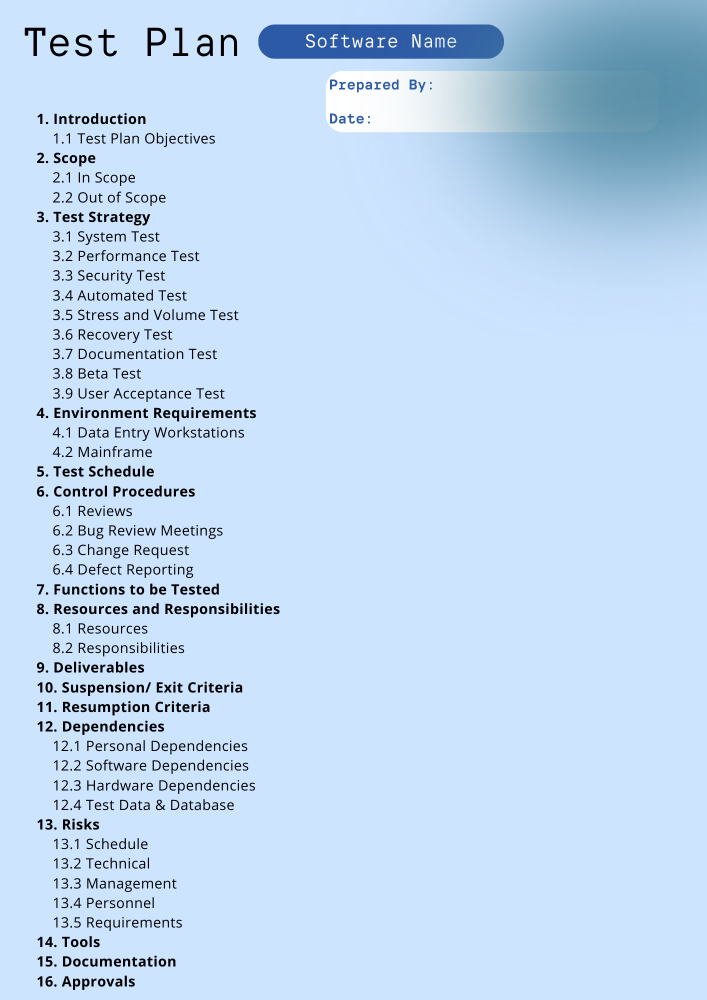

1. Defining Objectives and Scope

The first step in developing a test plan for AI code generators is to clearly define the objectives and scope of testing. Objectives can include assessing the accuracy, efficiency, and reliability of the code produced by the AI. The scope should encompass factors such as code quality, adherence to specifications, and the ability to handle different programming languages and environments.

Objectives

Accuracy: Ensure the generated code meets functional requirements and performs correctly.

Efficiency: Evaluate the performance of the generated code in terms of execution time and resource usage.

Reliability: Test the consistency and stability of the code across different scenarios and edge cases.

Scope

Programming Languages: Test code generators across different programming languages.

Code Complexity: Include simple, complex, and highly intricate code generation tasks.

Integration: Assess how well the generated code integrates with existing systems and third-party libraries.

2. Developing Test Cases

Test cases are crucial for validating the performance and quality of AI-generated code. Effective test cases should cover a wide range of scenarios, including typical use cases, edge cases, and potential failure conditions.

Types of Test Cases

Functional Test Cases: Verify that the code performs the intended functions correctly.

Performance Test Cases: Assess the efficiency of the code in terms of speed and resource usage.

Integration Test Cases: Ensure that the generated code integrates seamlessly with other systems and components.

Security Test Cases: Evaluate the code for potential security vulnerabilities and compliance with security standards.
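As a rough illustration of a functional test case, the sketch below loads a piece of generated code and checks it against expected outputs. The `GENERATED_SOURCE` string, the `add` function, and the helper names are hypothetical stand-ins, not the output or API of any particular generator. It also assumes the code is trusted enough to run in-process; untrusted output would need a sandbox.

```python
# Hypothetical generator output for the prompt
# "write add(a, b) that returns the sum of two numbers".
GENERATED_SOURCE = '''
def add(a, b):
    return a + b
'''


def load_function(source, name):
    """Execute generated source in its own namespace and return one function."""
    namespace = {}
    # Assumption: the source is safe to execute here. Use a sandbox otherwise.
    exec(source, namespace)
    return namespace[name]


def run_functional_cases(func, cases):
    """Return one (args, expected, actual, passed) tuple per test case."""
    results = []
    for args, expected in cases:
        try:
            actual = func(*args)
        except Exception as exc:  # a crash counts as a failed case
            actual = exc
        results.append((args, expected, actual, actual == expected))
    return results


add = load_function(GENERATED_SOURCE, "add")
cases = [((1, 2), 3), ((-1, 1), 0), ((0, 0), 0)]
results = run_functional_cases(add, cases)
all_passed = all(passed for _, _, _, passed in results)
```

The same harness can be reused for other generated functions by swapping the source string and the list of cases.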

3. Creating Test Data

Test data is essential for simulating real-world scenarios and ensuring that the AI code generator performs well under various conditions. The quality and diversity of test data can significantly affect the effectiveness of the testing process.

Types of Test Data

Valid Data: Data that is expected to produce correct results.

Invalid Data: Data that should cause the code to handle errors or exceptions gracefully.

Edge Cases: Data that tests the boundaries of acceptable input and behavior.

Real-World Data: Data that closely mimics actual use cases and scenarios.

4. Establishing Evaluation Metrics

Evaluation metrics are used to assess the performance and quality of the generated code. These metrics provide quantitative and qualitative insights into how well the AI code generator meets its objectives.

Key Metrics

Code Accuracy: Measure how closely the generated code matches the intended requirements.

Execution Time: Evaluate the time taken for the code to execute and complete its tasks.

Resource Utilization: Assess the code's use of computational resources, such as memory and CPU.

Error Rate: Track the frequency and types of errors encountered during testing.

Maintainability: Evaluate how easily the generated code can be maintained and updated.
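Some of these metrics can be collected with the Python standard library alone. The following is a minimal sketch, assuming wall-clock time from `time.perf_counter` and peak allocation from `tracemalloc` are acceptable proxies for execution time and memory use. The `generated_divide` function and the `measure` helper are hypothetical names for illustration.

```python
import time
import tracemalloc


def measure(func, inputs):
    """Run func over all inputs and collect time, peak memory, and error rate."""
    errors = 0
    tracemalloc.start()
    start = time.perf_counter()
    for args in inputs:
        try:
            func(*args)
        except Exception:
            errors += 1
    elapsed = time.perf_counter() - start
    _, peak = tracemalloc.get_traced_memory()  # (current, peak) in bytes
    tracemalloc.stop()
    return {
        "execution_time_s": elapsed,
        "peak_memory_bytes": peak,
        "error_rate": errors / len(inputs) if inputs else 0.0,
    }


def generated_divide(a, b):
    """Stand-in for generated code; fails when b is zero."""
    return a / b


metrics = measure(generated_divide, [(1, 2), (3, 0), (4, 2), (5, 5)])
```

Note that `tracemalloc` slows execution, so timing and memory are better measured in separate runs when precise numbers matter. Maintainability and code accuracy usually need additional tooling or human review.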

5. Conducting Automated and Manual Testing

Both automated and manual testing play a vital role in evaluating AI code generators. Automated testing can streamline the process and cover a broad range of scenarios, while manual testing provides deeper insights and catches issues that automated tests might miss.

Automated Testing

Test Automation Tools: Use tools that can automatically run test cases and analyze results.

Continuous Integration: Integrate testing into the development pipeline for ongoing evaluation of code quality.

Manual Testing

Code Review: Conduct thorough reviews of the generated code to identify potential issues and improvements.

User Testing: Involve end users in testing to gather feedback on the functionality and usability of the generated code.
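For automated runs, one option is Python's built-in `unittest` module, driven programmatically so that a pipeline step can act on the outcome. This is only a sketch: the `is_even` source string is a hypothetical generator output, and how the exit code is consumed depends on the CI system in use.

```python
import io
import unittest

# Hypothetical generator output under test.
GENERATED_SOURCE = "def is_even(n):\n    return n % 2 == 0\n"

namespace = {}
exec(GENERATED_SOURCE, namespace)  # assumption: safe to execute in-process
is_even = namespace["is_even"]


class GeneratedCodeTests(unittest.TestCase):
    def test_even_number(self):
        self.assertTrue(is_even(4))

    def test_odd_number(self):
        self.assertFalse(is_even(7))

    def test_zero(self):
        self.assertTrue(is_even(0))


def run_suite():
    """Run the suite and return the result object plus the captured log."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(GeneratedCodeTests)
    stream = io.StringIO()
    result = unittest.TextTestRunner(stream=stream, verbosity=2).run(suite)
    return result, stream.getvalue()


result, log = run_suite()
# A CI script could pass this value to sys.exit() to fail the build.
exit_code = 0 if result.wasSuccessful() else 1
```

Running the same suite on every generator update gives a simple regression signal over time.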

6. Analyzing Test Results and Reporting

After conducting tests, it is crucial to analyze the results and generate comprehensive reports. These reports should highlight the performance of the AI code generator, identify any issues or areas for improvement, and provide actionable recommendations for enhancing the tool.

Reporting Elements

Test Summary: Provide an overview of the testing process, including objectives, scope, and methodologies.

Results: Present detailed results for each test case, including pass/fail status and any issues found.

Analysis: Offer an analysis of the results, highlighting trends, patterns, and areas of concern.

Recommendations: Provide recommendations for improving the AI code generator based on the test findings.
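The summary and results portions of such a report can be assembled directly from test outcomes. The sketch below is one possible shape: the `CaseResult` record, its fields, and the sample entries are all made up for illustration. Analysis and recommendations would still be written by a person.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class CaseResult:
    """Outcome of one test case (hypothetical record layout)."""
    name: str
    category: str  # e.g. "functional", "performance", "security"
    passed: bool
    detail: str = ""


def build_report(results):
    """Build a plain-text report: pass count, failures by category, failed cases."""
    total = len(results)
    passed = sum(1 for r in results if r.passed)
    by_category = Counter(r.category for r in results if not r.passed)
    lines = [f"Test summary: {passed}/{total} passed", "Failures by category:"]
    for category, count in sorted(by_category.items()):
        lines.append(f"  {category}: {count}")
    lines.append("Failed cases:")
    for r in results:
        if not r.passed:
            lines.append(f"  {r.name}: {r.detail}")
    return "\n".join(lines)


# Illustrative data only, not real measurements.
sample = [
    CaseResult("add_basic", "functional", True),
    CaseResult("parse_empty_input", "functional", False, "raised IndexError"),
    CaseResult("uses_eval", "security", False, "call to eval() found"),
]
report = build_report(sample)
```

Grouping failures by category makes it easier to spot patterns, such as a generator that passes functional checks but repeatedly fails security checks.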

7. Iterative Testing and Improvement

Testing is an iterative process, and continuous improvement is essential for maintaining the quality of AI-generated code. Regular testing and updates ensure that the code generator evolves with changing requirements and technological advancements.

Iterative Process

Feedback Loop: Incorporate feedback from testing into the development process for ongoing improvement.

Updates and Enhancements: Regularly update the test plan and testing procedures to address new challenges and requirements.

Monitoring: Continuously monitor the performance of the AI code generator and make adjustments as needed.

8. Ensuring Compliance and Standards

Compliance with industry standards and best practices is crucial for ensuring the quality and reliability of AI-generated code. Adherence to coding standards, security guidelines, and industry regulations helps maintain high-quality output.

Compliance Areas

Coding Standards: Ensure that the generated code adheres to established coding conventions and standards.

Security Standards: Validate that the code complies with security best practices and addresses potential vulnerabilities.

Regulatory Compliance: Ensure compliance with applicable regulations and guidelines for software development.
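Parts of a compliance check can be automated with static analysis. The sketch below uses Python's `ast` module to flag two example rules: calls to `eval` or `exec`, and functions without docstrings. These rules are an illustrative policy chosen for this example, not a complete coding or security standard, and the sample source is hypothetical.

```python
import ast

# Illustrative policy only; a real standard would cover far more.
BANNED_CALLS = {"eval", "exec"}


def find_violations(source):
    """Return human-readable policy violations found in the source, by line."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"line {exc.lineno}: syntax error"]
    found = []
    for node in ast.walk(tree):
        # Flag direct calls such as eval(...), identified by function name.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in BANNED_CALLS:
                found.append((node.lineno, f"call to {node.func.id}()"))
        # Flag functions that lack a docstring.
        if isinstance(node, ast.FunctionDef) and ast.get_docstring(node) is None:
            found.append((node.lineno, f"function {node.name} has no docstring"))
    return [f"line {lineno}: {message}" for lineno, message in sorted(found)]


# Hypothetical generated code that breaks both rules.
SAMPLE = "def run(expr):\n    return eval(expr)\n"
violations = find_violations(SAMPLE)
```

A check like this catches only what its rules describe, so it complements dedicated linters, security scanners, and manual review rather than replacing them.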

Conclusion

Testing AI code generators is a complex but essential process that requires a comprehensive and well-structured test plan. By defining clear objectives, developing thorough test cases, creating diverse test data, establishing evaluation metrics, and conducting both automated and manual testing, you can ensure that AI code generators produce high-quality, reliable, and efficient code. Continuous improvement and adherence to industry standards further enhance the effectiveness of the testing process, paving the way for more advanced and reliable AI-driven code generation tools.

Testing AI Code Generators: Essential Components of a Robust Test Plan
